AI Strategy and Governance

Why Every Security Leader Needs to Care Right Now

2/26/2026

By CyberMentor365 | February 2026

Let me be honest with you. A year ago, most CISOs I spoke with treated AI governance like a "nice to have." Something for the compliance team to worry about later. Fast-forward to today, and the conversation has completely flipped. Regulations are landing. Boards are asking tough questions. And if you're in cybersecurity leadership — whether full-time or advisory — AI governance is no longer someone else's problem. It's yours.

So let's break it down. No jargon soup. No copy-paste frameworks. Just what you actually need to know, and more importantly, what you need to do.

What Are We Actually Talking About?

Let's get the definitions out of the way because people mix these up constantly.

AI Strategy is your game plan. It answers: Where does AI fit in our business? What problems are we solving with it? How much risk are we willing to take? Think of it as the "why" and "what" behind AI adoption.

AI Governance is your set of guardrails. It's the policies, roles, processes, and controls that keep AI ethical, secure, explainable, and compliant. It's the "how": how you ensure AI doesn't go sideways once it's live.

Here's the thing most people miss: you can't have one without the other. Strategy without governance is reckless. Governance without strategy is just bureaucracy. The organizations getting this right in 2026 treat governance as an enabler, not a roadblock.

The Regulatory Clock Is Ticking

If you're reading this from the UAE, pay attention — things are moving fast here.

The UAE AI Landscape

The UAE doesn't have a single AI law yet, but it has built a whole policy ecosystem around AI. Abu Dhabi set up the Artificial Intelligence and Advanced Technology Council (AIATC) under Law No. 3 of 2024, tasked with developing and implementing AI policies and strategies for the emirate. Then came the UAE Charter for the Development and Use of AI (launched June 2024), which laid out twelve guiding principles around safety, privacy, transparency, and human well-being.

And here's the big one: Dubai and the broader UAE are enforcing a risk-based AI regulatory framework starting March 2026. Four risk tiers — minimal, limited, high, and critical — with penalties reaching up to AED 10 million for prohibited AI deployments. If that sounds familiar, it's because the structure mirrors the EU AI Act but is tailored to UAE priorities like smart cities, healthcare, and finance.

The EU AI Act Timeline

Even if you're not operating in Europe, the EU AI Act sets the global benchmark. Here are the dates that matter:

· February 2025: Prohibitions on banned AI practices took effect, and AI literacy became mandatory.

· August 2025: General-purpose AI models must meet transparency and documentation obligations.

· August 2026: The big one. High-risk AI requirements become enforceable. This covers AI used in areas such as hiring, credit scoring, education, and law enforcement.

· August 2027: High-risk requirements extend to AI embedded in products regulated under Annex I.

If your organization builds or deploys AI that touches any of those categories, August 2026 is your real deadline. Don't wait for it.
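If you want to keep those dates in front of your team, here is a minimal Python sketch that reports which milestones already apply on a given date and how long you have until the next one. The day-of-month values (the 2nd) follow the Act's application schedule; the `MILESTONES` table, the paraphrased labels, and both helper names are my own illustrative choices, not official tooling.

```python
from datetime import date
from typing import Optional

# Milestones from the timeline above; labels are paraphrased summaries.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices banned; AI literacy mandatory"),
    (date(2025, 8, 2), "General-purpose AI transparency and documentation"),
    (date(2026, 8, 2), "High-risk AI requirements enforceable"),
    (date(2027, 8, 2), "High-risk requirements for Annex I products"),
]


def milestones_in_force(on: date) -> list[str]:
    """Return the label of every milestone whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def days_until_next(on: date) -> Optional[int]:
    """Return days until the next milestone, or None if all have passed."""
    upcoming = [start for start, _ in MILESTONES if start > on]
    return (min(upcoming) - on).days if upcoming else None


if __name__ == "__main__":
    today = date.today()
    for label in milestones_in_force(today):
        print("In force:", label)
    print("Days until next milestone:", days_until_next(today))
```

Dropping something like this into a dashboard or a weekly report is a cheap way to keep the deadline visible to people outside the security team.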

Why This Lands on the CISO's Desk

Here's a question I get a lot: "Isn't AI governance the job of the Chief Data Officer or the AI team?"

Short answer: partially. But the security angle? That's squarely on you.

Think about it. AI systems consume massive amounts of sensitive data. They make decisions that affect people's lives — hiring, lending, healthcare. They introduce entirely new attack surfaces: data poisoning, model evasion, adversarial manipulation, prompt injection. These aren't theoretical risks. They're happening now.

CISOs already manage risk registers, run compliance programs, handle data protection, and work with third-party vendors. AI governance is a natural extension of what you already do. You're not starting from scratch — you're expanding.

Here's what the CISO's AI governance role looks like in practice:

· Threat modeling for AI — Understanding attack vectors like data poisoning, model theft, and inference attacks

· AI risk in the enterprise risk register — Treating AI risk alongside traditional cyber risk, not in a separate silo

· Acceptable Use Policies — Defining what employees can and cannot do with AI tools (more on this below)

· Third-party AI assessments — Evaluating vendor AI systems for security and compliance gaps

· Cross-functional leadership — Sitting at the governance table alongside legal, compliance, data science, and business

Whether you're a sitting CISO or offering vCISO services, this is a differentiator. Clients and employers increasingly want security leaders who speak AI governance fluently.

The Two Frameworks You Need to Know

You don't need to memorize every framework out there, but two deserve your full attention.

1. NIST AI Risk Management Framework (AI RMF)

NIST structured their framework around four functions:

· Govern — Set up the organizational foundations: policies, roles, accountability, and leadership buy-in

· Map — Identify and understand the context: data flows, stakeholders, dependencies, and potential impacts

· Measure — Monitor and evaluate: performance metrics, bias indicators, model drift, trustworthiness

· Manage — Respond and mitigate: prioritize risks, oversee third parties, continuously improve

It's practical, flexible, and especially useful for organizations that already align with NIST CSF for cybersecurity. If you're a NIST shop, this is a natural extension.

2. ISO/IEC 42001: The AI Management System

ISO 42001 is the world's first international standard specifically for AI management systems. It specifies requirements for establishing, implementing, maintaining, and improving an AI management system (AIMS).

Here's what makes it powerful for security professionals: it shares the same harmonized management-system structure as ISO/IEC 27001. If your organization is already certified for information security, you can extend your existing management system to cover AI governance. Same structure. Same audit rhythm. Same continuous improvement cycle. You're not rebuilding; you're adding a layer.

The smartest approach? Use both. ISO 42001 as your foundational compliance framework, and NIST AI RMF as a dynamic, risk-responsive overlay that keeps your governance adaptive.

Building Your AI Governance Program: A Practical Roadmap

Enough theory. Let's talk about what to actually do. Here's a phased approach that works for organizations of all sizes.

Phase 1: Lay the Foundation (Weeks 1–4)

Get executive sponsorship. This won't work without a mandate from the top. AI governance needs a seat at the leadership table — not buried in IT.

Build your AI inventory. You'd be surprised how many organizations can't answer a simple question: "How many AI systems are we running right now?" Document every AI tool in use. Who owns it. What data it touches. What decisions it influences. What risk category it falls into.
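A spreadsheet works fine for this, but if you'd rather keep the inventory in code, here is a minimal Python sketch of one record per AI system. The field names, the `RiskTier` values (borrowed from the four tiers mentioned earlier), and the sample entries are all illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Tier names reused from the four-tier model described earlier (assumption).
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    CRITICAL = "critical"


@dataclass
class AISystem:
    name: str
    owner: str                       # accountable person or team
    data_touched: list[str]          # kinds of data the system processes
    decisions_influenced: list[str]  # business decisions it feeds into
    risk_tier: RiskTier


# Hypothetical entries, for illustration only.
inventory = [
    AISystem("resume-screener", "HR Ops", ["applicant CVs"],
             ["interview shortlisting"], RiskTier.HIGH),
    AISystem("marketing-copy-assistant", "", ["public product info"],
             [], RiskTier.MINIMAL),
]


def missing_owner(systems: list[AISystem]) -> list[str]:
    """Return the names of systems that have no accountable owner recorded."""
    return [s.name for s in systems if not s.owner.strip()]


print(missing_owner(inventory))
```

Even a tiny check like `missing_owner` tends to surface the shadow tools nobody has claimed yet, which is usually where the first governance conversations start.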

Form a cross-functional governance committee. This isn't just a security thing. You need legal, compliance, data science, operations, and business leadership around the table.

Phase 2: Policies and Processes (Weeks 5–8)

Write your AI Acceptable Use Policy. This is the single most impactful document you can produce right now. It should cover:

· Scope — Everyone. Employees, contractors, vendors.

· Data classification for AI — What data can go into which tools. Public data? Any tool. Internal data? Approved enterprise tools only. Sensitive or regulated data? Explicit approval required.

· Approved tools — Name them. If it's not on the list, it's not approved.

· Prohibited uses — No processing regulated data without approval. No autonomous decisions without human review. No passing off AI outputs as human work without disclosure.

· Accountability — Employees own responsibility for AI outputs they use.

· Review cadence — Quarterly minimum. AI moves too fast for annual reviews.
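The data classification rule in that policy is simple enough to express as code, which helps if you later want to enforce it in a proxy or browser plugin. Here is a minimal Python sketch. The tool names and the `may_submit` function are hypothetical, and requiring an approved tool for sensitive data (on top of explicit approval) is my own assumption about how the rule would be applied.

```python
# Hypothetical approved-tool list; a real policy would name actual products.
APPROVED_ENTERPRISE_TOOLS = {"enterprise-copilot", "internal-llm-gateway"}


def may_submit(data_class: str, tool: str, has_explicit_approval: bool = False) -> bool:
    """Decide whether data of a given class may be sent to an AI tool.

    Rules from the policy outline above:
      public    -> any tool
      internal  -> approved enterprise tools only
      sensitive / regulated -> explicit approval required
    """
    if data_class == "public":
        return True
    if data_class == "internal":
        return tool in APPROVED_ENTERPRISE_TOOLS
    if data_class in {"sensitive", "regulated"}:
        # Assumption: approval only counts when the tool is also approved.
        return tool in APPROVED_ENTERPRISE_TOOLS and has_explicit_approval
    raise ValueError(f"unknown data class: {data_class!r}")


print(may_submit("internal", "random-chatbot"))
print(may_submit("regulated", "enterprise-copilot", has_explicit_approval=True))
```

Unknown data classes raise an error rather than defaulting to "allowed", so a gap in the classification scheme fails closed.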

Create an intake process for new AI use cases. Every new AI initiative should go through a lightweight assessment: What's the purpose? What data is involved? Who's affected? What could go wrong?

Phase 3: Risk Assessment and Controls (Weeks 9–12)

Run a gap analysis against ISO 42001 and NIST AI RMF. Where are you today? Where do you need to be?

Implement operational controls:

· Model documentation and version control

· Dataset lineage tracking

· Bias testing and fairness evaluation

· Continuous monitoring for drift and performance degradation

· AI-specific incident response procedures

· Human-in-the-loop controls for high-risk decisions
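To make the drift-monitoring control less abstract, here is a minimal Python sketch using the Population Stability Index (PSI), one commonly used drift statistic. Neither framework mandates PSI; I'm using it as an example. The bin count, the floor value for empty buckets, and the function name are my own assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp so out-of-range values land in the first or last bucket.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor keeps the logarithm defined for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data: the recent sample is shifted toward higher values.
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]
print(round(population_stability_index(baseline, recent), 2))
```

A widely quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating, but treat those cutoffs as a starting point and tune them per model.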

Connect AI governance to your existing processes. Link it to procurement, security assessments, privacy impact assessments, and your software development lifecycle. Don't create a parallel universe — integrate.

Phase 4: Operationalize and Scale (Ongoing)

Automate where you can. Governance-as-code — automated compliance checks, evidence capture, monitoring dashboards — makes this sustainable. Manual governance doesn't scale.
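What might an automated compliance check look like? Here is a minimal Python sketch that runs a few policy rules over inventory records and reports violations. The record fields (`owner`, `risk_tier`, `risk_assessment_done`, `human_review`) and the three rules are hypothetical; swap in whatever your own policy requires.

```python
def check_system(record: dict) -> list[str]:
    """Return a list of policy findings for one AI system record."""
    findings = []
    if not record.get("owner"):
        findings.append("no accountable owner")
    if not record.get("risk_assessment_done", False):
        findings.append("risk assessment not completed")
    high_risk = record.get("risk_tier") in {"high", "critical"}
    if high_risk and not record.get("human_review", False):
        findings.append("high-risk system lacks human-in-the-loop review")
    return findings


def run_checks(inventory: list[dict]) -> dict[str, list[str]]:
    """Map system name to findings, keeping only systems with at least one finding."""
    report = {}
    for record in inventory:
        findings = check_system(record)
        if findings:
            report[record["name"]] = findings
    return report


# Hypothetical inventory records, for illustration only.
inventory = [
    {"name": "resume-screener", "owner": "HR Ops", "risk_tier": "high",
     "risk_assessment_done": True, "human_review": False},
    {"name": "faq-chatbot", "owner": "Support", "risk_tier": "limited",
     "risk_assessment_done": True},
]

for name, findings in run_checks(inventory).items():
    print(name, "->", "; ".join(findings))
```

Run on a schedule or in a pipeline, a script like this doubles as evidence capture: the report itself is the audit trail.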

Establish metrics. Track what matters: number of AI systems inventoried, percentage with completed risk assessments, policy compliance rates, incident response times for AI-related events.
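Those four metrics are straightforward to compute once the inventory exists. Here is a minimal Python sketch, assuming each record carries hypothetical boolean fields `risk_assessment_done` and `policy_compliant`, and that AI incident response times are logged in hours.

```python
from statistics import mean


def governance_metrics(inventory: list[dict], incident_response_hours: list[float]) -> dict:
    """Summarize the four program metrics from inventory records and incident timings."""
    total = len(inventory)
    assessed = sum(1 for s in inventory if s.get("risk_assessment_done"))
    compliant = sum(1 for s in inventory if s.get("policy_compliant"))

    def pct(part: int) -> float:
        # Avoid division by zero when the inventory is empty.
        return round(100 * part / total, 1) if total else 0.0

    return {
        "systems_inventoried": total,
        "pct_risk_assessed": pct(assessed),
        "policy_compliance_rate": pct(compliant),
        "mean_incident_response_hours": (
            round(mean(incident_response_hours), 1) if incident_response_hours else None
        ),
    }


# Hypothetical data, for illustration only.
sample_inventory = [
    {"name": "a", "risk_assessment_done": True, "policy_compliant": True},
    {"name": "b", "risk_assessment_done": True, "policy_compliant": False},
    {"name": "c", "risk_assessment_done": True, "policy_compliant": True},
    {"name": "d", "risk_assessment_done": False, "policy_compliant": False},
]
print(governance_metrics(sample_inventory, [4.0, 10.0]))
```

Trend these numbers quarter over quarter; the direction of travel usually matters more to a board than any single snapshot.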

Plan for what's coming. Agentic AI — systems that act autonomously — is the next frontier. Your governance framework needs to anticipate emergent behaviors, autonomous decision chains, and real-time intervention capabilities.

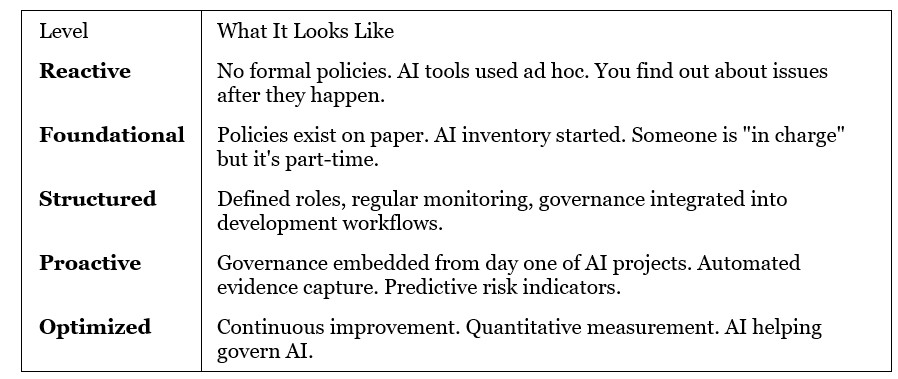

Where Do You Stand? A Quick Maturity Check

Be honest with yourself. Where does your organization sit?

Most organizations today sit somewhere between Reactive and Foundational. That's not a criticism — it's a starting point. The goal is to move deliberately, not skip levels. Skipping levels creates gaps that bite you later.

My Take

I've spent over two decades in cybersecurity, and I've seen this pattern before. First, cloud was "someone else's problem." Then it became everyone's problem overnight. AI governance is on the exact same trajectory — except the timeline is compressed.

The CISOs and security leaders who lean into this now won't just be managing risk. They'll be shaping how their organizations use one of the most transformative technologies in history. That's not a compliance exercise. That's leadership.

Whether you're a full-time CISO at a mid-market company, a vCISO advising SMBs, or an aspiring security leader building your career — make AI governance your next skill. Learn the frameworks. Draft the policies. Lead the conversation.

The organizations that get governance right will innovate faster, not slower. And the security leaders who drive that conversation? They'll be the ones everyone wants in the room.

What's your take? Have you started building an AI governance program at your organization? Drop a comment or reach out — I'd love to hear how you're tackling this.